Something in Team Creation Was Confusing Users

87% of users reached the Create Team page, but only 19% moved forward to payment — a 68-point drop at a single screen.
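
To put that drop in relative terms, here is a minimal Python sketch. It assumes both percentages are measured against the same base of all users, which the 68-point figure implies but the data above does not state outright. The function name `funnel_drop` and its output keys are illustrative, not part of any analytics tool.

```python
def funnel_drop(reached_pct, converted_pct):
    """Compare two funnel steps that share the same base (all users).

    Assumption: both inputs are percentages of the same user population.
    """
    if not 0 < reached_pct <= 100:
        raise ValueError("reached_pct must be in (0, 100]")
    if not 0 <= converted_pct <= reached_pct:
        raise ValueError("converted_pct must be between 0 and reached_pct")

    # Absolute gap between the two steps, in percentage points.
    point_drop = reached_pct - converted_pct

    # Share of users who reached the first step and also continued.
    continued_pct = converted_pct / reached_pct * 100

    return {
        "point_drop": point_drop,
        "continued_pct": round(continued_pct, 1),
        "abandoned_pct": round(100 - continued_pct, 1),
    }


# Figures from the Create Team funnel above.
result = funnel_drop(87, 19)
print(result)
```

Under that assumption, about 22% of the users who reached the Create Team page continued to payment, so roughly 78% abandoned at this one screen.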

How I Investigated

I combined three research methods to understand what was happening and why.

Heatmap Analysis

Mapped tap density across the Create Team screen. Revealed where users were tapping — and where the UI wasn’t responding.

Session Recordings

Watched real user sessions frame by frame. Identified repeated patterns of confusion and rage-tapping on disabled elements.

Follow-up Interviews

Spoke directly with users who abandoned the flow. Their frustration pointed to the same invisible rule every time.

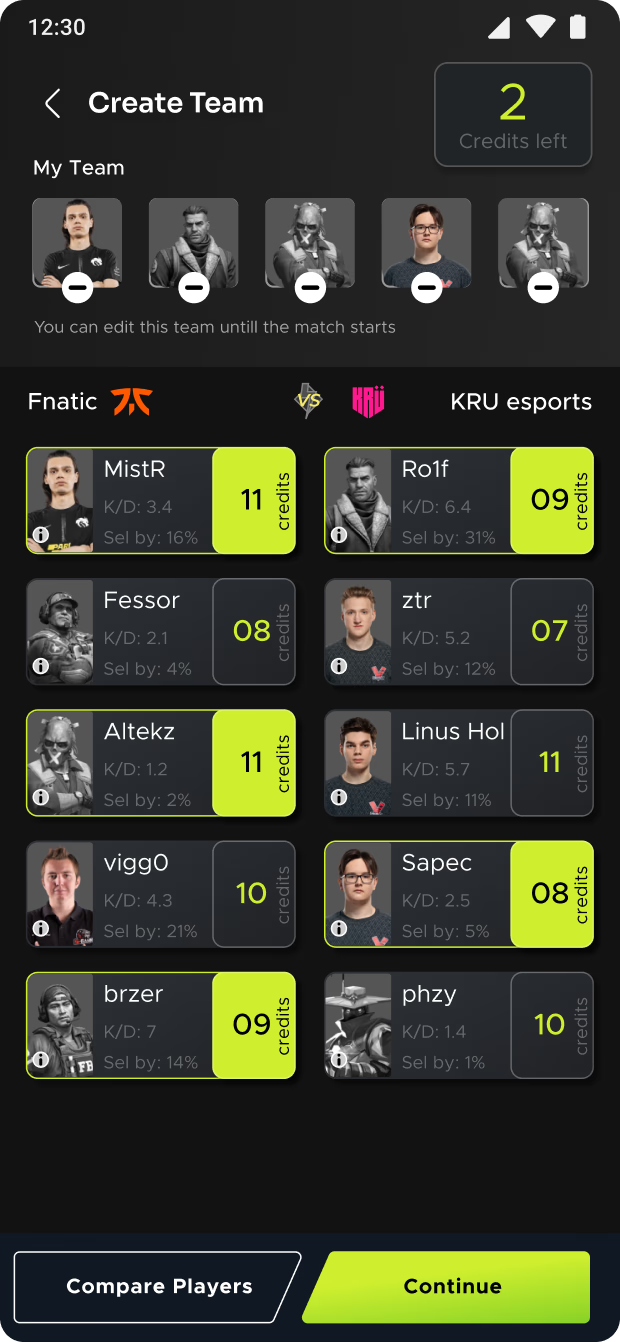

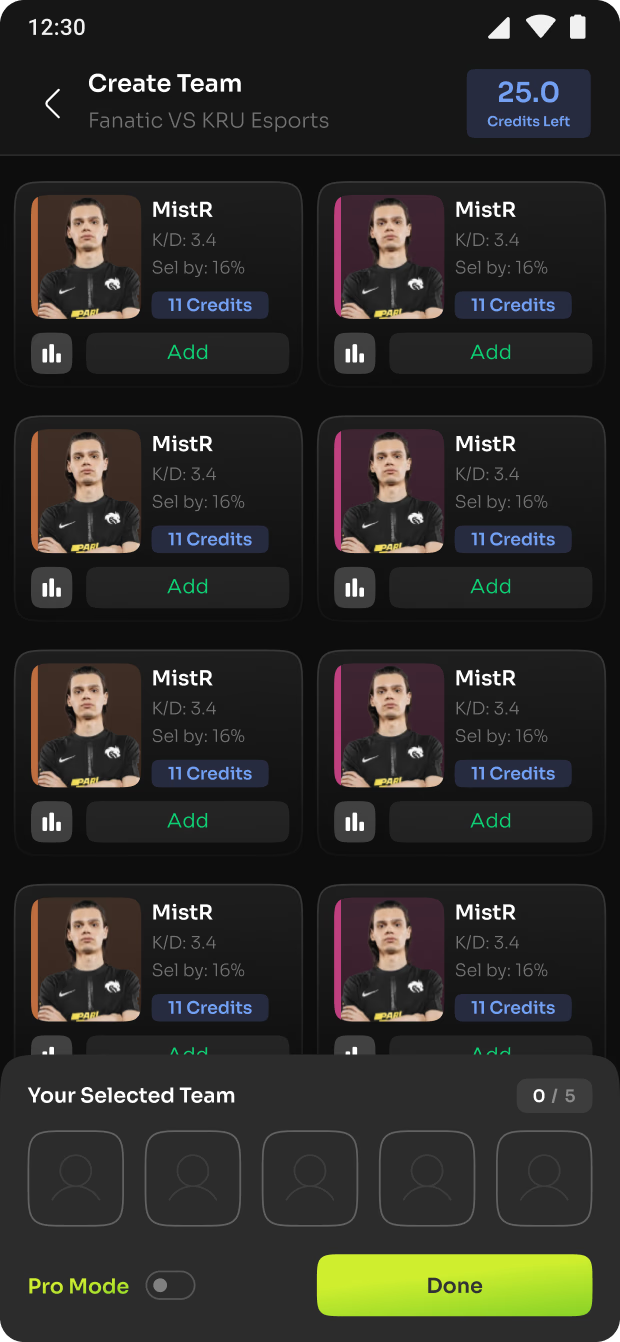

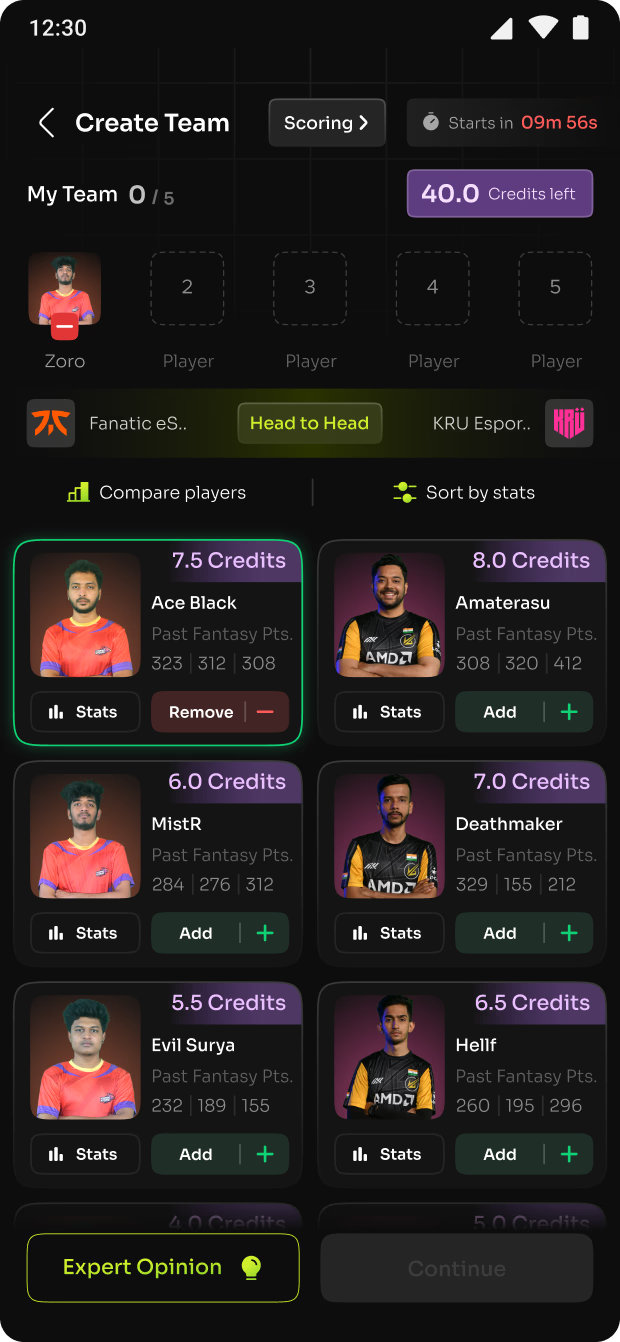

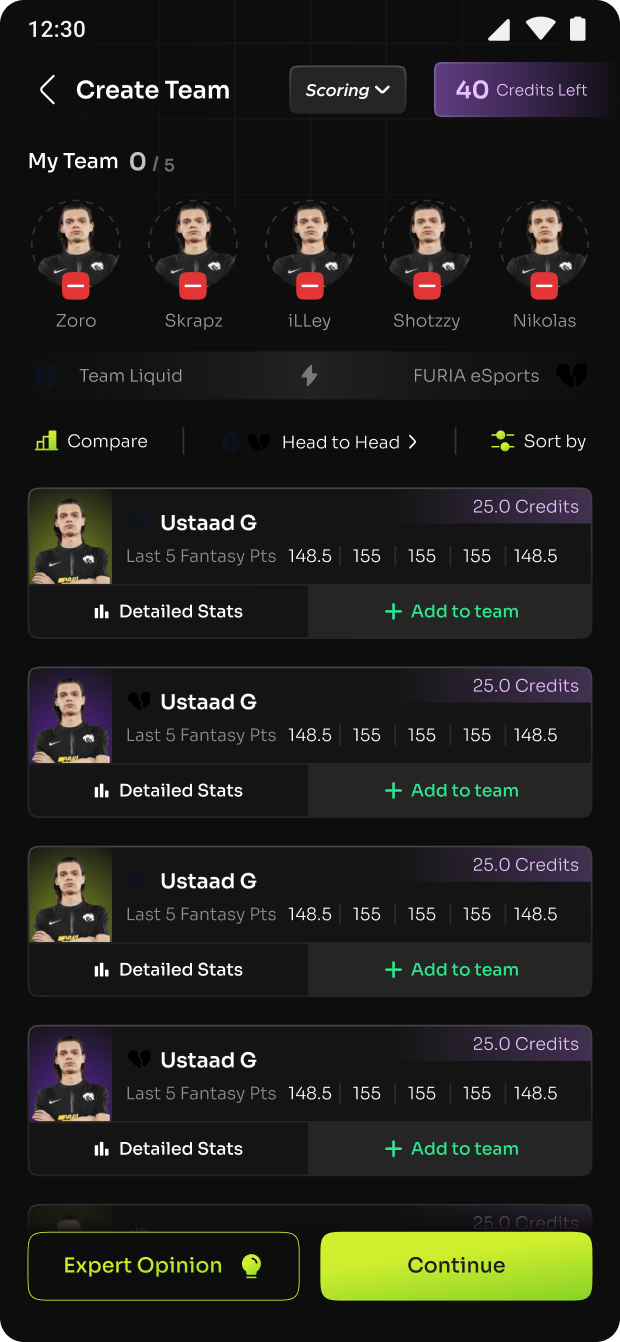

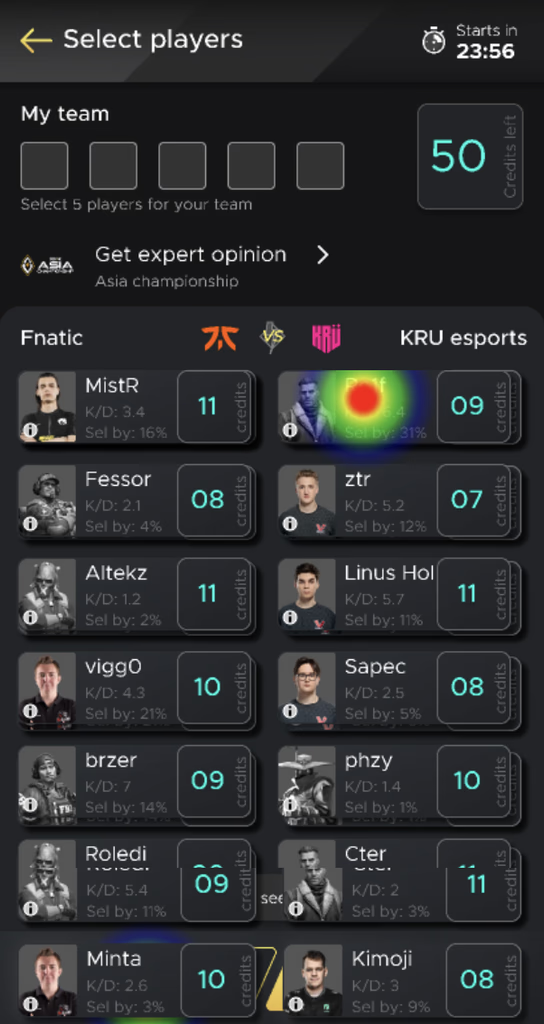

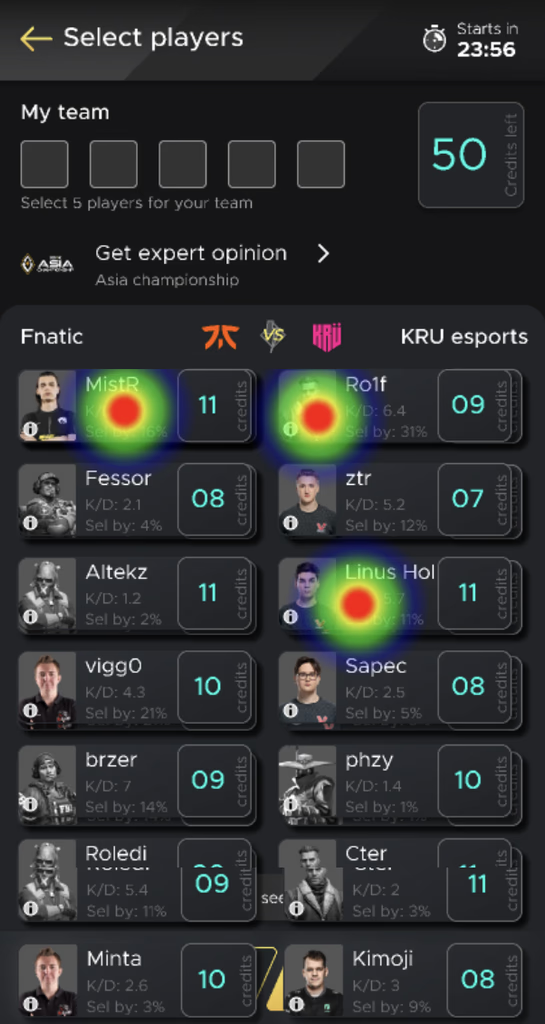

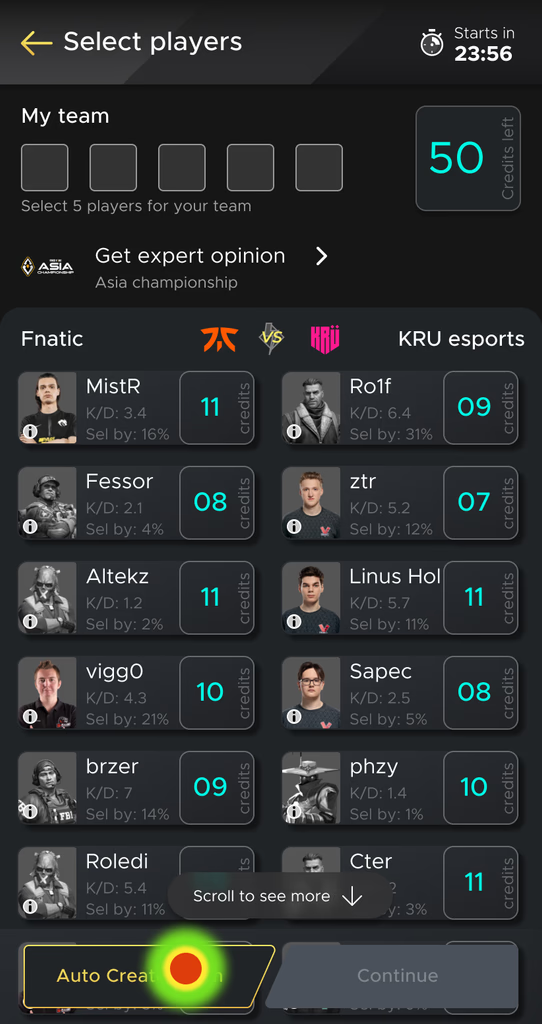

Heatmaps and session recordings showed where the confusion happened. Users were tapping disabled player cards repeatedly, checking stats pages for answers, and scrolling without finding the rule.

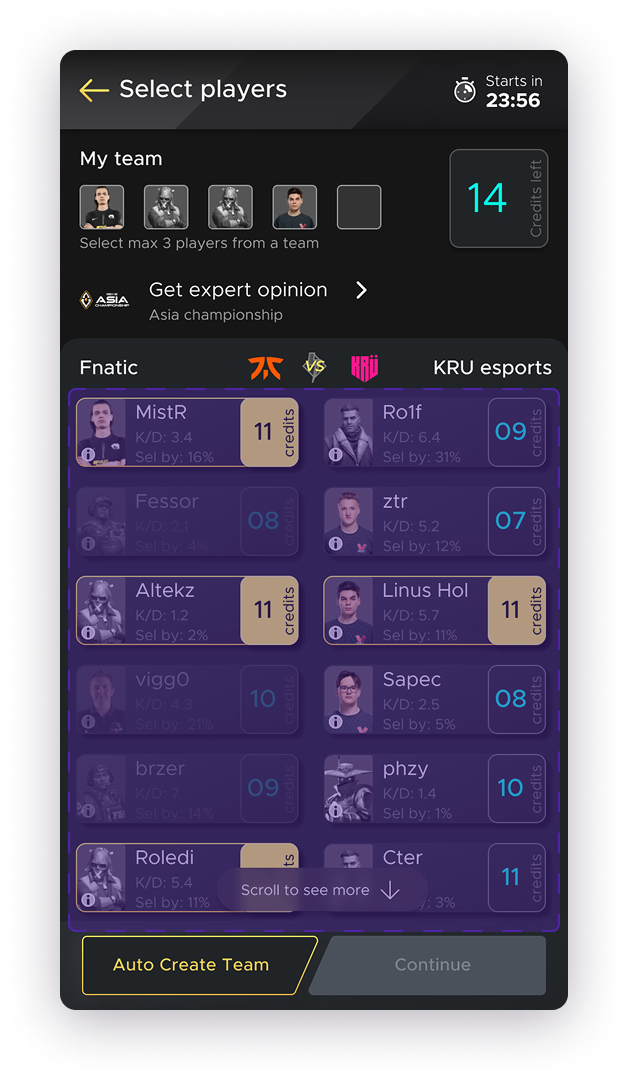

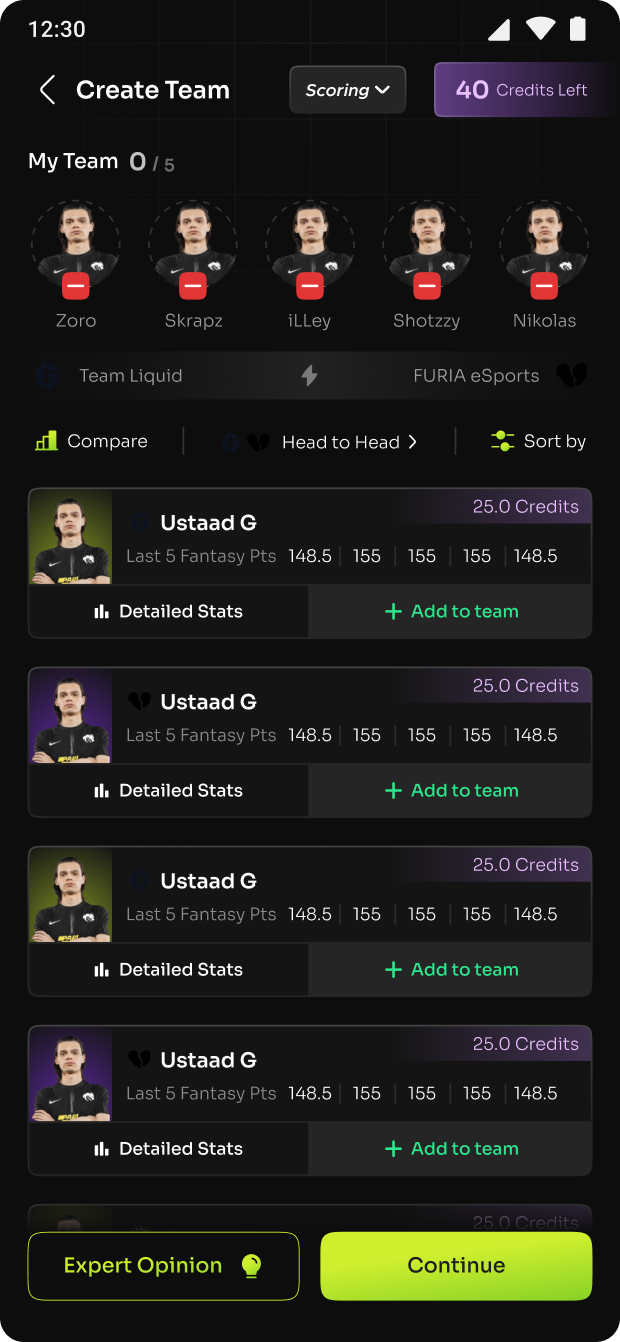

Heatmaps and session recordings from the original Create Team screen
